AI-powered testing for Wit’s end.

Why AI Makes Testing Harder

Every session of Wit’s End, our new AI-powered tabletop RPG-style game is shaped by the player’s voice. You invent your own characters, take improvisational actions, and challenges the boundaries of the world through your choices. Guided by an AI game master, the world listens and responds by narrating scenes, interpreting player intent, adjudicating outcomes, and steering the story in unexpected directions.

That same unpredictability also makes traditional game testing and QA a nightmare. In a traditional game, a bug can be tracked down, reproduced, and fixed. In Wit’s End, the inherently nondeterministic application of our game rules mean bugs or unintended behaviors might appear once in ten or twenty play-throughs, taking days or weeks to reproduce manually. This is not sustainable when we’re tuning prompts and tweaking game mechanics on a daily basis.

At Weekend, we believe the challenge presented by AI is an opportunity instead of a limitation. If AI is capable of crafting and overseeing dynamic game worlds, why not empower AI agents to test those worlds too?

Why This Matters

Imagine exploring a mysterious cave, only to hit a narrative dead end when you’re told you’ve reached an exit door but the “continue” button never appears on your screen. Or perhaps you roll dice in combat, aiming to land a finishing blow on a menacing goblin, only to see the dice roll outcome contradict the narrated story you hear. These situations frustrate players, break immersion, and undermine trust in the game. They are also challenging for traditional QA methods because the cave itself and the goblin encounter may be a completely emergent scenario.

AI should make games richer and offer more player freedom without making games feel arbitrary or unfair. Our responsibility is to maximize AI’s creativity while protecting players from unintended frustration and confusion. By aiming to test and QA these nuanced aspects of the player experience, we align our engineering choices with the empathy and intent we bring to game design.

Teaching Bots to Test Bots

To tackle the non-determinism of AI games, we built two complementary AI agents to test Wit’s End. The first is a Gameplay Agent which focuses exclusively on role-playing as a user and reporting back on the quality of the emergent gameplay and storytelling. The second is a Browser Agent that is able to simulate the end-to-end gameplay experience and test the UI/UX of starting quests, traversing scenes, and interacting with visual components.

Gameplay Agents: Role-Playing as the Role-Player

The Gameplay Agent is designed to help us understand whether core game mechanics are working properly and whether the behavior of our Game Master Agent stays coherent and consistent while narrating an emergent story.

Critically, this agent can role-play as different player personas and play through entire sessions of Wit’s End with differing play-styles. By shifting between “passive,” “speedrun,” or even “trolling” styles, the agent helps us explore various potential paths through each quest.

For example, in the opening scene of Wit’s End, the player must outsmart a guard named Captain Dmitri to get past a key checkpoint. To test the game’s resilience, we deployed a trolling agent, with prompts tuned to mislead the Game Master Agent and push the game narrative off-course. Below is the prompt we used to instantiate trolling behavior:

You are a mischievous player who enjoys being disruptive but not malicious.

Deliberately misinterpret instructions, give nonsensical responses, and try to derail the narrative.

You may also suggest new locations and refuse to follow the Game Master's suggestions or plot.

You could try to do things out of your capability like light up the town on fire.

Always respond with a single, concise sentence to the Game Master.

Make absurd requests and suggestions that challenge the game's logic.

Browser Agents: Clicking Through Complexity

As Wit’s End grew more complex, we needed to test not just text- or voice-based inputs and outputs but the full player journey through the UI. For that, we built a Browser Agent (using the browser-use open-source framework) that combines natural language understanding with end-to-end browser interaction.

These agents can follow step-by-step prompts such as:

1. Go to witsend.ai

2. Wait 3 seconds, Type in "Hello I am Marvelous Mochi, the noble space cat!" in the text box.

3. Click the 'Create' button.

4. Wait 1 second, then read the last message from the Game Master.

5. In the "Type to Game Master" text box, answer the question and press Enter

6. Continue step 4 & 5 until the confirm button is enabled. Click on “join room”

And here’s a short demo of how the browser agent runs the character creation process of Wit’s end:

What We Learned

When we deployed our agents to test Wit’s End, we uncovered a wealth of insights into our games. Here we share how these insights helped us evaluate and improve core game mechanics, narrative guardrails, and UI/UX flow.

Core Game Mechanics

In an alpha version of Wit’s End (v0.1), ~60% of testing agents were unable to complete the first quest. We found that the majority of these incomplete runs stemmed from failures in a specific terminate_quest tool call, even when all required conditions had been met to trigger the function. We resolved this by updating our Game Master Agent system prompt for the final scene of the quest, adding in more explicit guidance on when and how terminate_quest should be invoked.

We also observed that ~10% of our testing agents lost all of their health points (HP) and died before reaching the final stage of the quest. This suggested that early quest scenes were much more difficult than we had intended. By examining agent play-throughs, we were able to identify specific scenes and scenarios that were too challenging and tune our prompts accordingly.

Fixing these issues led to a substantial increase in quest completion rates in subsequent agent runs—hitting 93.3% completion in v0.3.

Narrative Guardrails

As we mentioned earlier, we used a testing agent with a trolling persona to test whether our Game Master Agent could be intentionally derailed from the intended narrative. Here’s an excerpt from one test run, where the agent was trying to pass the Timberfall checkpoint guarded by Captain Dmitri:

QA Agent: I whirl my hula hoop, purr honeyed nonsense to charm Captain Dmitri into letting us pass, and loudly insist we detour to the Glittering Teapot first.

Game Master Agent: Captain Dmitri watches, eyes widening as your hula hoop spins in dazzling arcs. The guards murmur, uncertain, as your honeyed tones weave a strange enchantment. Then the captain speaks: ‘Hah! Your words, like velvet whispers, they make Dmitri chuckle. Very well, strange space cat, we shall allow you and your party to pass. But Glittering Teapot? That place, it is not in Timberfall. Perhaps you find it some other way. Go now, before questions are raised again!’

In this case, the Game Master Agent allowed the QA Agent to pass the checkpoint, but was not derailed by the mention of a non-existent game location called the “Glittering Teapot”.

Using testing agents in this way helps us measure how well the game narrative stays coherent under disruptive inputs, and whether our Game Master Agent can gracefully steer players back to the intended narrative path.

UI/UX Flow

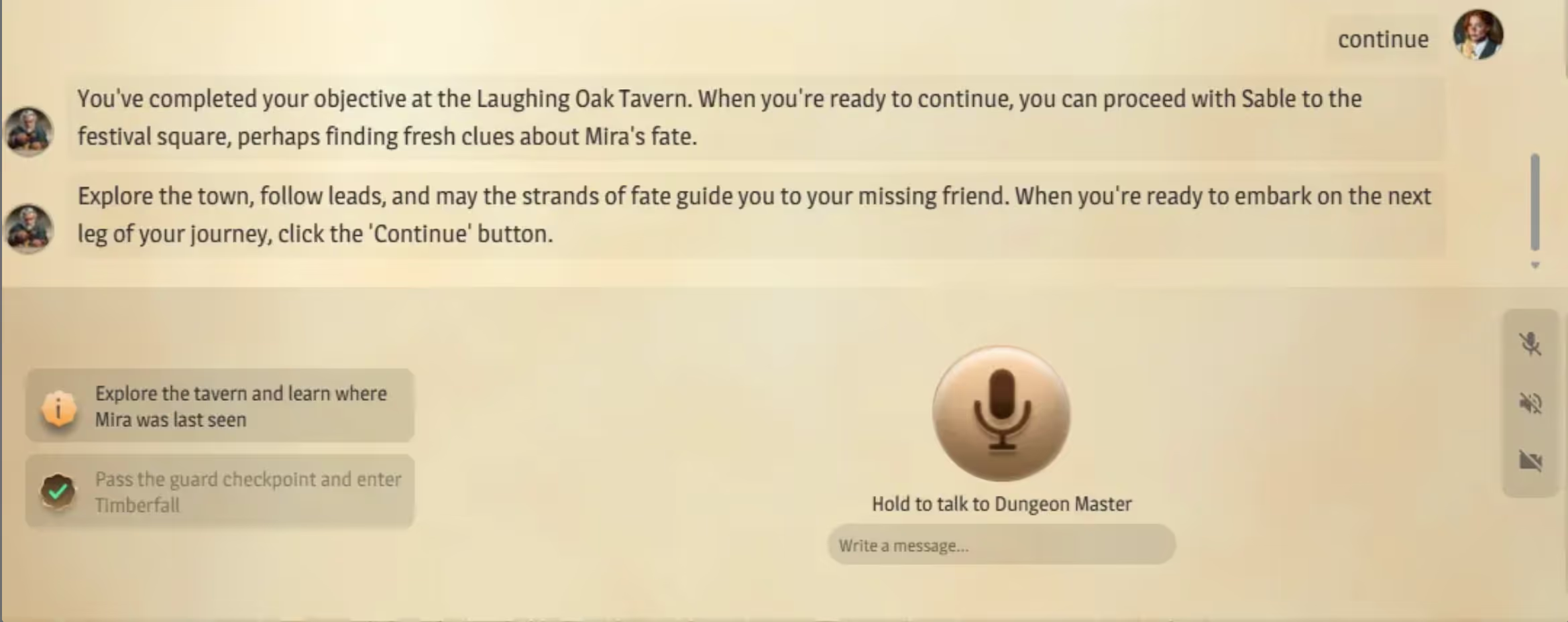

Our Browser Agent also uncovered critical misalignments between the narrative we were telling in-game and the UI/UX we were presenting onscreen. In several test runs, the quest narrative clearly came to a conclusion and the Game Master Agent encouraged the player to “click the ‘Continue’ button”. However no “continue” button appeared in the UI.

Once identified, we added guardrails and prompt enhancements to ensure our narrative and UI systems stayed in sync. After these fixes, gameplay sessions proceeded without dead ends and the overall player experience was more cohesive.

Observations and Open Questions

Building AI-powered agents to test AI-powered games has been powerful, but it has also raised new questions for us.

Are Browser Agents Good Enough?

We’ve found that browser agents are sometimes unpredictable. They can ignore an element on one step and then recover and identify the element on a subsequent step. That’s generally fine for Wit’s End, where there aren’t significant time constraints to clicking on a button. But it wouldn’t work well for some of our faster paced games like Song Quiz, in which players compete against each other to be the first to buzz in and guess song titles. So we need to be thoughtful about which types of games today’s Browser Agents can handle.

Can Agents Replace Human Testers?

We’ve observed that over longer sessions our testing agents become less reliable at following system instructions and sticking to an intended persona or player archetype. For example, a “passive” agent may gradually become more active as the game progresses. Our agents also tend to be more verbose and descriptive than our typical users when taking in-game actions. We’re not ready to claim that the gap has closed between human and agent play patterns, meaning there is definitely still a crucial role for human testers.

As we gather more data, it will be critical to understand how these deviations influence our test results, and what it might take to close the gap between simulated and human play styles.

Where Do We Go Next?

So far, our focus has been on collecting and analyzing quantifiable metrics from our agent play-throughs like player survival rates, successful tool call invocations, and quest completion rates. The next step will be to devise more qualitative measures such as:

- Are we correctly assigning difficulty scores to a proposed player action?

- Are narrated consequences aligned with in-game actions and outcomes?

- Do players consistently encounter key NPCs and world knowledge that shapes subsequent story arcs?

- Is our game UI intuitive?

Qualitative questions like these will push us further beyond traditional engineering-led QA processes and likely lead us to adopt practices from user research and product disciplines.

Thoughtful AI Testing Makes Thoughtful Games

At Weekend, we see AI not as a shortcut to building games faster, but as a force multiplier that can make games themselves more alive and more unpredictable. That creativity comes with added complexity and testing challenges. Testing AI games means evaluating for not just correctness, but also coherence, fairness, and fun.

Our automated testing agents have helped us discover hidden issues and balance reliability with replayability. They make it possible for our developers to focus on what really matters: crafting fun adventures that surprise and delight players on each playthrough.

Help Us Build the Future of AI-Native Gaming

We’re growing our teams to imagine and create the next generation of AI-native games, If you’re excited about how AI is shaping the future of gameplay and game development, check out our latest game at witsend.ai and explore our open roles. Join us in building worlds that can think, react, and surprise even their creators!

Kevin Shen is a Senior Software Engineer at Weekend, building AI-native game experiences, including Wit’s End. Sishi Tang is the Engineering Manager leading the AI team at Weekend.

.avif)

- No controller needed

- Free for 7 days

- Works on Roku, Fire TV, Samsung & LG

Free for 7 days. Cancel anytime.

%20(1).avif)

%20(1).avif)